PromptShield

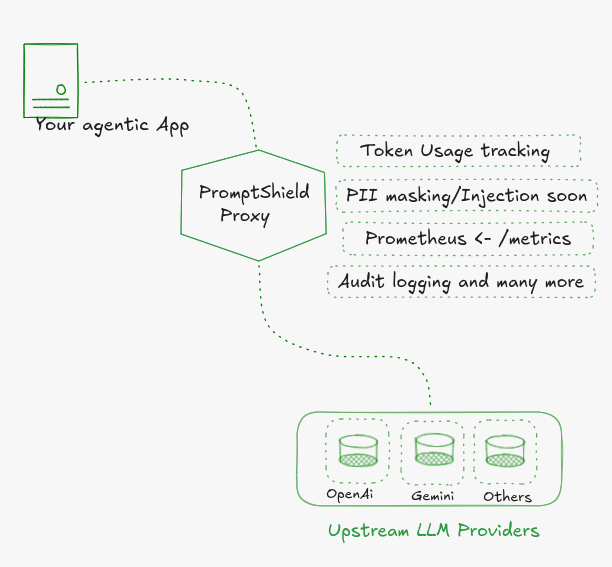

Open source LLM gateway. Scans every prompt and response for PII and secrets, enforces policy, and routes to any provider. Runs on your infrastructure.

Point your SDK at the proxy and every request passes through scanning, policy enforcement, and routing on its way to your provider. No other code changes are needed.
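
As a rough illustration of "pointing your SDK at the proxy", the sketch below builds an OpenAI-style chat request whose base URL is the proxy rather than the provider. The proxy address `http://localhost:8080/v1`, the `/chat/completions` path, the model name, and the helper `build_chat_request` are all assumptions made for this example, not documented PromptShield values; check your own deployment for the real endpoint.

```python
import json
import urllib.request

# Hypothetical address of a self-hosted proxy; substitute your own deployment.
PROXY_BASE_URL = "http://localhost:8080/v1"


def build_chat_request(base_url: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": "gpt-4o-mini",  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Only the base URL differs from a direct provider call.
request = build_chat_request(PROXY_BASE_URL, "sk-placeholder", "Hello")
print(request.full_url)
```

Most provider SDKs expose an equivalent base URL setting, so in practice the change is usually a single configuration value.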

What it catches

- Secrets: AWS keys, GitHub tokens, OpenAI keys, Stripe keys, Slack tokens, DB connection strings, private keys

- PII: email, phone, SSN, credit card, IBAN, passport, medical license (30+ types)

- Responses: scans LLM output before it reaches your app

Block or mask per entity type. Policy is a YAML file in your repo.

Built to deploy your way

Self-host it and prompts are scanned on your own servers before anything is sent to a provider. Full control, no vendor in the path. Works for regulated industries out of the box.

Need a managed setup or help getting started? Open a discussion.

Policy

| Action | Behavior |
|---|---|
| block | HTTP 403. LLM never called. Zero tokens consumed. |
| mask | PII replaced with [ENTITY_TYPE]. Sanitized prompt forwarded. |
| allow | Passes through unchanged. |
| warn | Logs and allows. Coming soon. |
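
The actions in the table can be sketched in a few lines of Python. This is an illustration of the semantics only, not PromptShield's implementation: the two regex detectors, the `POLICY` mapping, and the `apply_policy` helper are hypothetical, and the real policy lives in a YAML file whose schema is not shown here.

```python
import re

# Illustrative detectors only; a real engine covers far more entity types.
DETECTORS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}

# Hypothetical policy: entity type -> action. Unlisted types default to "allow".
POLICY = {"EMAIL": "mask", "AWS_ACCESS_KEY": "block"}


def apply_policy(prompt, policy=POLICY):
    """Return (status, text). Status 403 means the LLM must not be called."""
    found = [name for name, rx in DETECTORS.items() if rx.search(prompt)]

    # block: reject the whole request before any tokens are spent.
    if any(policy.get(name, "allow") == "block" for name in found):
        return 403, None

    # mask: replace each match with its entity type, then forward.
    for name in found:
        if policy.get(name, "allow") == "mask":
            prompt = DETECTORS[name].sub(f"[{name}]", prompt)

    # allow: anything else passes through unchanged.
    return 200, prompt
```

Checking `block` before `mask` means a prompt containing any blocked entity is rejected outright, even if other entities in it could have been masked.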

Modes

Set PROMPTSHIELD_ENGINE_URL to connect the detection engine.

| | Proxy | Proxy + Engine |
|---|---|---|
| Rate limiting | ✓ | ✓ |
| Token tracking | ✓ | ✓ |
| Provider routing | ✓ | ✓ |
| Audit logs | ✓ | ✓ |
| Metrics | ✓ | ✓ |
| Request scanning | | ✓ |
| Policy enforcement | | ✓ |
| Response scanning | | ✓ |
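
One way to read the two modes is as a feature set selected by `PROMPTSHIELD_ENGINE_URL`. The sketch below encodes that reading; the `enabled_features` helper is hypothetical, and treating an unset or empty variable as proxy-only mode is an assumption rather than documented behavior.

```python
import os

# Features available in both modes.
BASE_FEATURES = {
    "rate limiting", "token tracking", "provider routing", "audit logs", "metrics",
}
# Features that additionally require the detection engine.
ENGINE_FEATURES = {"request scanning", "policy enforcement", "response scanning"}


def enabled_features(env=None):
    """Return the feature set implied by the environment (assumed semantics)."""
    env = os.environ if env is None else env
    features = set(BASE_FEATURES)
    # Assumption: an unset or empty variable means proxy-only mode.
    if env.get("PROMPTSHIELD_ENGINE_URL"):
        features |= ENGINE_FEATURES
    return features
```

For example, `enabled_features({})` yields only the base features, while passing a mapping that sets `PROMPTSHIELD_ENGINE_URL` adds the three scanning and enforcement features.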